Introduction

This comprehensive guide is designed to help your organization successfully implement and adopt LaunchDarkly feature flags as part of your Center of Excellence initiative. Whether you’re a program manager setting objectives or a developer implementing SDKs, this guide provides structured, step-by-step guidance to ensure successful LaunchDarkly adoption across your development teams.

What You’ll Find Here

Program Management

Establish clear objectives, key results, and measurable success criteria for your LaunchDarkly implementation. This section helps program managers and leadership:

- Define OKRs aligned with business objectives

- Set up governance and best practices

- Track adoption metrics and success indicators

SDK Implementation

Technical guidance for developers implementing LaunchDarkly SDKs in their applications. This section includes:

- Preflight Checklists - Step-by-step implementation guides

- Configuration best practices

- Security and compliance considerations

- Testing and validation procedures

Getting Started

New to LaunchDarkly? Start with the Program Management section to understand the strategic approach and objectives.

Ready to implement? Jump to the SDK Preflight Checklist for hands-on technical guidance.

Looking for specific guidance? Use the search functionality to quickly find relevant information.

How to Use This Guide

This guide is structured as a progressive journey:

- Plan - Establish program objectives and success criteria

- Prepare - Complete preflight checklists for your technology stack

- Implement - Follow step-by-step SDK integration guides

- Validate - Test and verify your implementation

- Scale - Apply best practices across your organization

Each section builds upon the previous one, but you can also jump to specific topics based on your immediate needs.

Need Help?

- LaunchDarkly Documentation: launchdarkly.com/docs

- LaunchDarkly Developer Hub: developers.launchdarkly.com

- LaunchDarkly Help Center: support.launchdarkly.com

- LaunchDarkly Academy: launchdarkly.com/academy

Overview

This topic explains the objectives and key results for the LaunchDarkly Program. We recommend using the following guidelines when creating objectives and key results:

Objectives

Write objectives as clear, inspirational statements that describe what you want to achieve. Each objective should be:

- Measurable through specific key results

- Aligned with broader program goals

- Reviewed and updated regularly to reflect changing priorities

Limit each objective to three to five key results.

Key results

Key results measure progress toward your objective. They must be specific, measurable, and verifiable.

Use quantitative metrics:

- State exact numbers, percentages, or timeframes

- Define what you measure and how you measure it

- Include baseline values when comparing improvements

- Specify the time period for measurement

Focus on outcomes:

- Measure results, not activities

- Track what changes, not what you do

- Use leading indicators that predict success

- Include lagging indicators that confirm achievement

Ensure clarity:

- Use clear language that anyone can understand

- Avoid vague terms like “improve” or “better”

- Include specific targets or thresholds

- Define success criteria explicitly

Set realistic targets:

- Aim for 70% to 80% achievement probability

- Set stretch goals that require effort to reach

- Review historical performance to inform targets

Include time-bound elements:

- Set clear deadlines or timeframes

- Define measurement periods

- Align timing with objective review cycles

Active OKRs

This section contains the current OKRs for the LaunchDarkly Program.

Application teams onboard quickly and correctly with minimal support

Key Results

- Time to provision and access LaunchDarkly with correct permissions is less than one day per team

- Less than three hours of ad hoc support requested per team during onboarding

- Less than five support tickets created per team during onboarding

- All critical onboarding tasks complete and first application live in a customer-facing environment within two sprints

Tasks

To achieve this objective, complete the following tasks:

- Create self-service documentation with step-by-step guides and video tutorials when necessary

- Configure single sign-on (SSO) and identity provider (IdP) integration

- Define teams and member mapping

- Assign all flag lifecycle related actions to at least one member in each project

- Define how SDK credentials are stored and made available to the application

- Create application onboarding checklist and a method to track completion across teams

Team members find flags related to their current task unambiguously

Key Results

- More than 95% of new flags created per quarter comply with naming convention

- More than 95% of active users access dashboard filters and shortcuts at least once per month

- More than 95% of new flags created per quarter include descriptions with at least 20 characters and at least one tag

- More than 95% of release flags created per quarter link to a Jira ticket

- Zero incidents of incorrect flag changes due to ambiguity per quarter

Tasks

To achieve this objective, complete the following tasks:

- Create naming convention document

- Document flag use cases and when and when not to use flags

- Create a method to track compliance with naming convention

- Enforce approvals in critical environments

Team members de-risk releases consistently

Key Results

- Starting Q1 2026, 90% of features requiring subject matter expert (SME) testing released per quarter are behind feature flags

- More than 75% of P1/P2 incidents related to new features per quarter are remediated without new deploys

- Mean time to repair (MTTR) reduced by 50% compared to baseline for issues related to new features by end of Q2 2026

Tasks

To achieve this objective, complete the following tasks:

- Define and document release strategies:

- Who does what when

- How to implement in the platform using targeting rules and release pipelines

- How to implement in code

- Define and document incident response strategies

- Integrate with software development lifecycle (SDLC) tooling

- Enable project management

- Enable communication

- Enable observability and application performance monitoring (APM)

- Enable governance and change control

LaunchDarkly usage is sustainable with minimal flag-related technical debt

Key Results

- More than 95% of active flags have a documented owner at any point in time

- More than 95% of active flags have an up-to-date description and tags and comply with naming conventions at any point in time

- Median time to archive feature flags after release is less than 12 weeks

- 100% of flags older than six months are reviewed quarterly

- Flag cleanup service level agreements (SLAs) are established and followed for 100% of projects

Tasks

To achieve this objective, complete the following tasks:

- Implement actionable dashboards to visualize flag status including new, active, stale, and launched

- Define flag archival and cleanup policies

- Implement code references integration

Archived OKRs

This section contains previous OKRs and their outcomes.

Projects and environments

Map your applications and deployment environments to LaunchDarkly projects and environments. The key principle: fewer is better.

Quick answers

How many projects do I need? Start with one project per product or tightly coupled set of services. Only create separate projects when teams need complete independence.

How many environments do I need? Most organizations need 2-3 environments: Development, Staging, and Production. Use targeting rules to handle multiple deployment environments within these.

Data model

LaunchDarkly organizes feature flags using a hierarchical structure:

- Projects contain feature flags and environments

- Feature flags exist at the project level in all environments of that project

- Environments contain the targeting rules for each flag

- SDKs connect to a single environment and receive the state of every flag for that environment

Applications exist as a top-level resource outside of projects.

Key concepts

Projects group related applications that need to coordinate releases:

- Share feature flags across all applications in the project

- Enable prerequisites and approvals for coordinated launches

- Define who can access and modify flags

Environments represent stages in your development lifecycle:

- Each has its own targeting rules and approval workflows

- SDKs connect to one environment at a time

- Use targeting rules to consolidate multiple deployment environments

Next steps

- Learn how to map applications to projects

- Learn how to map deployment environments to LaunchDarkly environments

Projects

Default recommendation: Start with one project per product. Create separate projects only when teams have no release dependencies.

Decision framework

Use this framework to determine whether to create a separate project:

Use an existing project when

Code executes in the same process, application, or web page:

- Frontend and backend for a single-page application

- Multiple services in a monolith

- Components within the same web application

Applications are tightly coupled:

- Frontend depends on specific backend API versions

- Services communicate via internal APIs with hard dependencies

- Components must be released in lockstep

Create a separate project when

Applications are loosely coupled:

- Services communicate via public APIs with version management

- Services can be released independently

- Applications serve different products or business units

No coordination is needed:

- Applications have no release dependencies

- Teams work independently with no shared releases

- Applications serve different customer segments

Key benefits of shared projects

Sharing projects for tightly coupled applications provides:

- Prerequisites for coordinated releases across frontend and backend

- Approvals for collaborative change management

- Single pane of glass for release visibility

Important: Each flag needs a clear owner, even in shared projects. Avoid shared responsibility for individual flags.

What projects contain

Projects scope these resources:

- Feature flags: Shared across all environments in the project

- Flag templates: Standardized flag configurations

- Release pipelines: Automated release workflows

- Context kinds: Custom context type definitions

- Permissions: Project-scoped roles control access

Coordinating releases across projects

When applications in separate projects need to coordinate releases, use these strategies:

- Request metadata: Pass API version or client metadata in requests for server-side coordination

- Delegated authority: Grant teams permission to manage flags in other projects

To learn more about coordination strategies, read Coordinating releases.

Corner cases

Global flags

Scenario: Many loosely coupled microservices in separate projects need to change behavior based on shared state.

Solution: Pass shared state as custom context attributes. Identify a single source of truth and propagate evaluation results from the owner of the state, rather than duplicating the state across projects.

Onboarding new teams

Scenario: A new team joins and needs to integrate with existing applications.

Process:

- Determine if the new team has dependencies on flags in existing projects

- If dependencies exist, include the team in the shared project

- If no dependencies exist, create a separate project

- Document the coordination strategy if projects are separate

Organizing flags within projects

Use views to organize flags by tags and metadata within projects. This provides flexible grouping without creating additional projects.

To learn more, read Views.

Environments

Key principle: Less is more. Most organizations need only 2-3 LaunchDarkly environments regardless of how many deployment environments they have.

Default recommendation

Start with these environments:

- Development: No approvals, rapid iteration

- Staging: Optional approvals, testing before production

- Production: Required approvals, critical environment

Use targeting rules with custom attributes to handle multiple deployment environments within each LaunchDarkly environment.

Why consolidate environments?

Each LaunchDarkly environment adds operational overhead:

- Maintain targeting rules across multiple environments

- Synchronize flag states manually

- Manage separate SDK credentials

- Review and approve changes in each environment

Consolidate deployment environments using targeting rules instead.

When to create separate environments

| Scenario | Create separate environment? | Solution |

|---|---|---|

| Per developer | No | Use individual user targeting or custom attributes |

| Per PR or branch | No | Pass PR number or branch name as custom attributes |

| Per tenant or customer | No | Use targeting rules with tenant context attributes |

| Per geographic region | No | Pass region as a custom attribute |

| Federal vs public cloud with compliance requirements | Yes | Compliance mandates complete isolation |

| Production vs development with different approval workflows | Yes | Different stakeholders and approval requirements |

| Different data residency requirements | Yes | Legal or regulatory requirements mandate separation |

Solutions for common scenarios

Per-developer testing

Instead of creating developer environments, use:

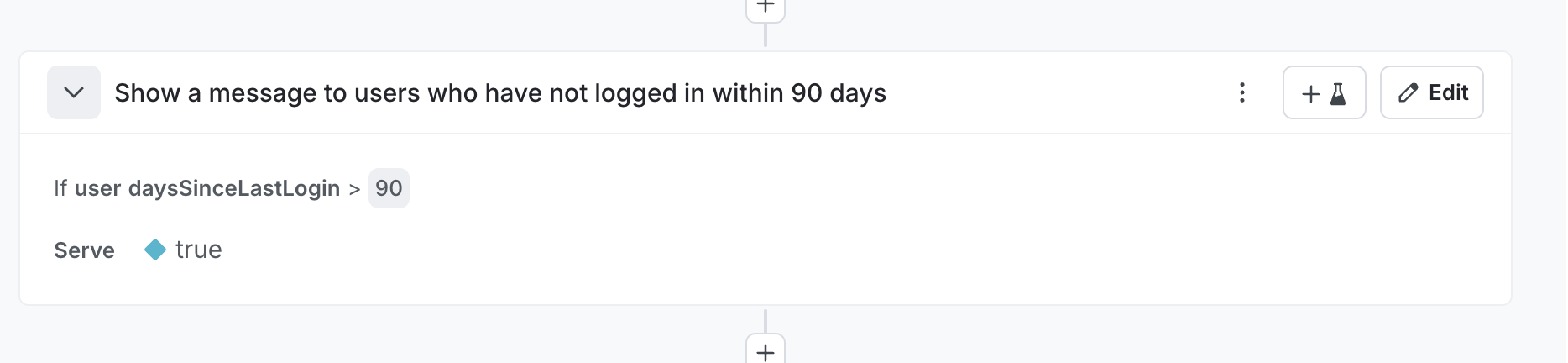

Individual user targeting: Create rules like If user equals "alice@example.com" then serve Available

Custom attributes: Pass developer, workstation, or branch_name as context attributes

Local overrides: Use SDK wrappers or test frameworks to mock flag values

Per-PR or ephemeral environments

Pass deployment metadata as custom attributes:

Context:

- pr_number: "1234"

- hostname: "pr-1234.staging.example.com"

- git_sha: "abc123"

Rule: If pr_number equals "1234" then serve Available

Multi-tenant deployments

Pass tenant information as context attributes:

Context:

- tenant_id: "acme"

- subscription_tier: "enterprise"

Rule: If tenant_id is one of ["acme", "initech"] then serve Available

Mapping process

Follow these steps:

- List deployment environments: Document where your application runs

- Group by compliance and approval requirements: Separate only when compliance mandates it or approval workflows differ significantly

- Define custom attributes: Document attributes needed to distinguish consolidated environments

- Mark production as critical: Enable required approvals and UI warnings

Mark production environments as critical to require approvals and prevent accidental changes. To learn more, read Critical environments.

Real-world examples

Multiple regions → Single production environment

You have: US-East, US-West, EU, APAC production deployments

Create: 1 Production environment

Pass context attribute: region: "eu"

Create rules when needed: If region equals "eu" then serve Available

Federal compliance → Separate environments

You have: Federal and Public clouds with different compliance requirements

Create: 2 environments (Federal, Public)

Why separate: Compliance mandates complete isolation

Within each: Use environment: "dev" attribute to distinguish dev/staging/prod deployments

Team-specific staging → Single staging environment

You have: Staging-TeamA, Staging-TeamB, Staging-TeamC

Create: 1 Staging environment

Pass context attribute: team: "team-a"

Create rules when needed: If team equals "team-a" then serve Available

Flag lifecycle management

Feature flags are temporary by nature unless explicitly designated as permanent. Without a lifecycle process, temporary flags accumulate as technical debt. This page covers how to classify flags, track their status, and clean them up.

Mark temporary vs. permanent at creation

Only temporary flags are subject to stale detection and archive-readiness checks. Classify flags at creation time:

| Temporary | Permanent |

|---|---|

| Release flags | Kill switches |

| Experiment flags | Operational flags |

| Migration flags | Entitlement flags |

Document the cleanup plan up front

Use the flag description to record:

- Purpose and expected behavior of each variation

- Responsible party or team

- Expected removal date

- Side effects or dependencies on other flags or services

Create the cleanup pull request at the same time as the feature pull request. The cleanup PR removes the flag checks and the dead code path, ready to merge once the feature is fully rolled out.

Include flag archival in your definition of done

A feature is not done when it ships. It is done when the temporary flag is archived.

Understand flag status signals

LaunchDarkly tracks flag status per environment:

| Status | Meaning |

|---|---|

| New | Created fewer than 7 days ago, never evaluated |

| Active | Being evaluated with multiple variations, recent configuration changes, or the flag is toggled off |

| Launched | Evaluated in the past 7 days, serving only one variation, no configuration changes in 7 days, and toggled on |

| Inactive | No evaluations for at least 7 days |

Understand cleanup stages

At the project level, LaunchDarkly surfaces two cleanup stages:

Ready for code removal: The flag meets all of these criteria:

- Temporary flag

- “Launched” status in all critical environments

- 30 or more days old

- Not a prerequisite for another flag

Ready to archive: The flag meets all of these criteria:

- Temporary flag

- “Inactive” status in all critical environments

- 30 or more days old

- No code references

- Not a prerequisite for another flag

Set up code references

The ld-find-code-refs utility scans your codebase and reports flag usage back to LaunchDarkly. It is available as a GitHub Action, GitLab CI job, or Docker container.

When all code references to a flag are removed and the scanning tool reruns, LaunchDarkly creates an extinction event confirming removal. A flag with no code references can then meet the criteria for the “Ready to archive” cleanup stage.

Customize lifecycle settings

In Project Settings > Lifecycle settings, configure the criteria for when LaunchDarkly considers a flag ready to archive:

- Minimum flag age

- How long targeting must be unchanged before code removal

- How long evaluations must be absent before archiving

- Whether the flag must not be a prerequisite

- Whether the flag must be serving one variation

- Whether the flag must be temporary

Build cleanup into your workflow

Flag cleanup works best when it is part of the team’s existing process, not a separate initiative. Choose a strategy that fits your team’s cadence:

Attach a cleanup ticket to every feature epic: When a team creates an epic for a flagged feature, add a cleanup ticket to the same epic. The ticket covers removing the flag checks, deleting the dead code path, and archiving the flag in LaunchDarkly. The epic is not closed until the cleanup ticket is done. Use flag links to connect the flag to the ticket in Jira, Trello, or other tracking systems.

Dedicate time for flag cleanup days: Schedule a quarterly cleanup day where teams review the “Needs code removal” and “Ready to archive” lists and work through the backlog. This works well for organizations where cleanup tickets tend to get deprioritized in favor of new work.

Assign cleanup to on-call or rotation: Some teams add flag cleanup to their on-call or bug rotation responsibilities. Engineers on rotation pick up flags from the cleanup list between other tasks.

Schedule regular reviews: Add a recurring calendar event for monthly or quarterly flag reviews. Walk through the “Needs code removal” and “Ready to archive” lists as a team during this session. Use the review to create cleanup tickets, reassign orphaned flags, and track progress against the previous review.

Use the Cleanup shortcut, custom shortcuts and filters

The built-in Cleanup shortcut appears in the left sidenav and surfaces flags that are ready to archive. You can also create additional ones to cover other flag types or combinations. Filter the Flags list by lifecycle stage, maintainer, or tag, then click the bolt icon above the list to save the filtered view as a new shortcut. Other ideas may be shortcuts for “Needs code removal” and “Ready to archive” so the lists are one click away when you need them.

Shortcuts are personal. Only you can see the shortcuts you create.

Use Views to organize flags by team

Views are different from shortcuts. A view is a project-level grouping of flags and segments that is visible to everyone in your account. Use views to organize flags by team or domain, for example “frontend,” “backend,” or “payments.” A flag can belong to more than one view.

Views also support access control. You can restrict access to a view so that only certain members or teams can see and modify the flags linked to it. This lets you scale to thousands of flags in a single project while keeping each team focused on the flags they own.

Use views alongside lifecycle management to assign flag ownership. When a team owns a view, they are responsible for the flags in it, including cleanup.

SDK Preflight Checklist

Audience: Developers who are implementing the LaunchDarkly SDKs

Init and Config

- SDK is initialized once as a singleton early in the application’s lifecycle

- Application does not block indefinitely for initialization

- SDK configuration integrated with existing configuration/secrets management

- Bootstrapping strategy defined and implemented

Client-side SDKs

Browser SDKs

Mobile SDKs

Serverless functions

Using Flags

- Define context kinds and attributes

- Define and document fallback strategy

- Use

variation/variationDetail, notallFlags/allFlagsStatefor evaluation - Flags are evaluated only where a change of behavior is exposed

- The behavior changes are encapsulated and well-scoped

- Subscribe to flag changes

Init and Config

Baseline Recommendations

This table shows baseline recommendations for SDK initialization and configuration:

| Area | Recommendation | Notes |

|---|---|---|

| Client-side init timeout | 100–500 ms | Don’t block UI. Render with fallbacks or bootstrap. |

| Server-side init timeout | 1–5 s | Keep short for startup. Continue with fallback values after timeout. |

| Private attributes | Configured | Redact PII. Consider allAttributesPrivate where appropriate. |

| Javascript SDK Bootstrapping | localStorage | Reuse cached values between sessions. |

SDK is initialized once as a singleton early in the application’s lifecycle

Applies to: All SDKs

Prevent duplicate connections, conserve resources, and ensure consistent caching/telemetry.

Implementation

- MUST Expose exactly one

ldClientper process/tab via a shared module/DI container (root provider in React). - SHOULD Make init idempotent: reuse the existing client if already created.

- SHOULD Close the client cleanly on shutdown. In serverless, create the client outside the handler for container reuse.

- NICE-TO-HAVE Emit a single startup log summarizing effective LD config (redacted).

Validation

- Pass if metrics/inspector show one stream connection per process/tab.

- Pass if event volume and resource usage do not scale with repeated imports/renders.

Application does not block indefinitely for initialization

Applies to: All SDKs

A LaunchDarkly SDK is initialized when it connects to the service and is ready to evaluate flags. If variation is called before initialization, the SDK returns the fallback value you provide. Do not block your app indefinitely while waiting for initialization. The SDK continues connecting in the background. Calls to variation always return the most recent flag value.

Implementation

- MUST Set an initialization timeout

- Client-side: 100–500 ms.

- Server-side: 1–5 s.

- SHOULD Race initialization against a timer if using an SDK that lacks a native timeout parameter.

- MAY Render/serve using bootstrapped or fallback values, then update when flags are ready.

- MAY Subscribe to change events to proactively respond to flag updates.

- MAY Configure a persistent data store to avoid fallback values in the event that the SDK is unable to connect to LaunchDarkly services.

Validation

- Pass if with endpoints blocked the app renders using fallbacks or bootstrapped values within the configured timeout.

- Pass if restoring connectivity updates values without a restart.

How to emulate:

- Point streaming/base/polling URIs to an invalid host

- Block the SDK domains in the container or host running the tests: stream.launchdarkly.com, sdk.launchdarkly.com, clientsdk.launchdarkly.com, app.launchdarkly.com

- In browsers, block

clientstream.launchdarkly.com,clientsdk.launchdarkly.com, and/orapp.launchdarkly.comin DevTools.

For implementation strategies, read the Emulating LaunchDarkly Downtime section in the cookbook.

If you use the Relay Proxy, read Emulating Relay Proxy issues for cold and warm relay test scenarios.

SDK configuration integrated with existing configuration/secrets management

Applies to: All SDKs

Use your existing configuration pipeline so LD settings are centrally managed and consistent across environments. Avoid requiring code changes setting common SDK options.

Implementation

- MUST Load SDK credentials from existing configuration/secrets management system.

- MUST NOT Expose the server-side SDK Key to client applications

- SHOULD Use configuration management system to set common SDK configuration options such as:

- HTTP Proxy settings

- Log verbosity

- Enabling/disabling events in integration testing or load testing environments

- Private attribute

Validation

- Pass if rotating the SDK key in the vault results in successful rollout and the old key is revoked.

- Pass if a repository scan finds no SDK keys or environment IDs committed.

- Pass if startup logs (redacted) show expected config per environment and egress connectivity succeeds with 200/OK or open stream.

Bootstrapping strategy defined and implemented

Applies to: JS Client-Side SDK in browsers, React SDK, Vue SDK

Prevent UI flicker by rendering with known values before the SDK connects and retrieves flags.

Implementation

- SHOULD Enable

bootstrap: 'localStorage'for SPAs/PWAs to reuse cached values between sessions. - SHOULD For SSR or static HTML, embed a server-generated flags JSON and pass to the client SDK at init.

- MUST Document which strategy each app uses and when caches expire.

- SHOULD Reconcile bootstrapped values with live updates and re-render when differences appear.

Validation

- Pass if under offline/slow network the first paint uses bootstrapped values with no visible flash of wrong content.

- Pass if clearing storage falls back to safe defaults and live updates correct the UI on reconnect.

- Pass if evaluations are recorded after successful initialization.

Client-side SDKs

The following items apply to all client-side and mobile SDKs.

Application does not block on identify

Calls to identify return a promise that resolves when flags for the new context have been retrieved. In many applications, using the existing flags is acceptable and preferable to blocking in a situation where flags cannot be retrieved.

Implementation

- MAY Continue without waiting for the promise to resolve

- SHOULD Implement a timeout when identify is called

Validation

- Pass The application is able to function after calling identify while the SDK domains are blocked: clientsdk.launchdarkly.com or app.launchdarkly.com

Application does not rapidly call identify

In mobile and client-side SDKs, identify results in a network call to the evaluation endpoint. Make calls to identify sparingly. For example:

Good times to call identify:

- During a state transition from unauthenticated to authenticated

- When an attribute of a context changes

- When switching users

Bad times to call identify:

- To implement a

currentTimeattribute in your context that updates every second - Implementing contexts that appear multiple times in a page such as per-product

Browser SDKs

The following items apply only to the following SDKs:

- Javascript Client SDK

- React SDK

- Vue SDK

Send events only for variation

Avoid sending spurious events when allFlags is called. Sending evaluation events for allFlags will cause flags to never report as stale and may cause inaccuracies in guarded rollouts and experiments with false impressions.

Implementation

- MUST Set

sendEventsOnlyForVariation: truein the SDK options

Validation

- Pass calls to allFlags do not generate evaluation/summary events

Bootstrapping strategy defined and implemented

Prevent UI flicker by rendering with known values before the SDK connects and retrieves flags. To learn more about bootstrapping, read Bootstrapping.

Implementation

- SHOULD Enable

bootstrap: 'localStorage'or bootstrap from a server-side-SDK

Validation

- Pass if under offline/slow network the first paint uses bootstrapped values with no visible flash of wrong content.

Mobile SDKs

The following items apply to mobile SDKs.

Configure application identifier

The Mobile SDKs automatically capture device and application metadata. To learn more about automatic environment attributes, read Automatic Environment Attributes.

We recommend that you set the application identifier to a different value for each separately distributed software binary.

For example, suppose you have two mobile apps, one for iOS and one for Android. If you set the application identifier to “example-app” and the version to “1.0” in both SDKs, then when you create a flag targeting rule based only on application information, the flag will target both the iOS and Android application. This may not be what you intend.

We recommend using different application identifiers in this situation, for instance, by setting “example-app-ios” and “example-app-android” in your application metadata configuration.

Implementation

- MUST Configure the application identifier in the SDK configuration to a unique value for each platform

You can override the application identifier using the application metadata options when configuring your SDK. To learn how to set a custom application identifier, read Application Metadata.

Validation

- Pass Separate applications appear in the LaunchDarkly dashboard for each platform, for example android, ios, etc.

Examples

Apple iOS/iPadOS/WatchOS

// Fetch the current CFBundleIdentifier

let defaultIdentifier = Bundle.main.object(forInfoDictionaryKey: "CFBundleIdentifier") as? String ?? "UnknownIdentifier"

// Create the ApplicationInfo object

var appInfo = ApplicationInfo()

// Override applicationIdentifier to include the -apple suffix

appInfo.applicationIdentifier("\(defaultIdentifier)-apple")

// Create an LDConfig object and set the applicationInfo property

let ldConfig = LDConfig(mobileKey: "your-mobile-key")

ldConfig.applicationInfo = appInfo

var config = LDConfig(mobileKey: mobileKey, autoEnvAttributes: .enabled)

config.applicationInfo = applicationInfo

Android

import com.launchdarkly.sdk.android.Components;

import com.launchdarkly.sdk.android.integrations.ApplicationInfoBuilder;

import com.launchdarkly.sdk.android.LDConfig;

// Fetch the current package name (application identifier)

String defaultPackageName = context.getPackageName(); // replace 'context' with your Context object

// Create the ApplicationInfoBuilder object

ApplicationInfoBuilder appInfoBuilder = Components.applicationInfo();

// Override applicationIdentifier to include the "-android" suffix

appInfoBuilder.applicationId(defaultPackageName + "-android");

// Build the ApplicationInfo object

ApplicationInfo appInfo = appInfoBuilder.createApplicationInfo();

// Create an LDConfig object and set the applicationInfo property

LDConfig ldConfig = new LDConfig.Builder()

.mobileKey("your-mobile-key")

.applicationInfo(appInfoBuilder) // Pass the ApplicationInfoBuilder here

.build();

Serverless functions

The following applies to SDKs running in serverless environments such as AWS Lambda, Azure Functions, and Google Cloud Functions.

Initialize the SDK outside of the handler

Many serverless environments re-use execution environments for many invocations of the same function. This means that you must initialize the SDK outside of the handler to avoid duplicate connections and resource usage.

Implementation

- MUST Initialize the SDK outside of the function handler

- MUST NOT Close the SDK in the handler

Leverage LD Relay to reduce initialization latency

Serverless functions spawn many instances in order to handle concurrent requests. LD Relay can be deployed in order to reduce outgoing network connections, reduce outbound traffic and reduce initialization latency.

Implementation

- SHOULD Deploy LD Relay in the same region as the serverless function

- SHOULD Configure LD Relay as an event forwarder and configure the SDK’s event URI to point to LD Relay

- SHOULD Configure the SDK in proxy mode or daemon mode instead of connecting directly to LaunchDarkly

- MAY Call flush at the end of invocation to ensure all events are sent

- MAY Call flush/close when the runtime is being permanently terminated in environments that support this signal. Lambda does provide this signal to functions themselves, only extensions.

Consider daemon mode if you have a particularly large initialization payload and only need a couple of flags for the function.

Using Flags

Define context kinds and attributes

Choose context kinds/attributes that enable safe targeting, deterministic rollouts, and cross-service alignment.

Implementation

- MUST Define context kinds, for example

user,organization, ordevice. Use multi-contexts when both person and account matter. - MUST NOT Derive context keys from PII, secrets or other sensitive data.

- MUST Mark sensitive attributes as private. Context Keys cannot be private

- SHOULD Use keys that are unique, opaque, and high-entropy

- SHOULD Document the source/type for all attributes. Normalize formats, for example ISO country codes.

- SHOULD Provide shared mapping utilities to transform domain objects → LaunchDarkly contexts consistently across services.

- SHOULD Avoid targeting on sensitive information or secrets.

Validation

- Pass if a 50/50 rollout yields consistent allocations across services for the same context.

- Pass if sample contexts evaluated in a harness match expected targets/segments.

- Pass if a PII audit finds no PII in keys and private attributes are redacted in events.

- Pass if applications create/define contexts consistently across services

Define and document fallback strategy

Every flag must specify a safe fallback value that is used when the flag is unavailable. For more information on fallback values, read Maintaining fallback values.

Implementation

- MUST Pass the fallback value as the last argument to

variation()/variationDetail()with correct types. - MUST Define a strategy for determining when to audit and update fallback values.

- MUST Implement automated tests to validate the application is able to function in an at most degraded state when flags are unavailable.

Validation

- Pass if blocking SDK network causes the application to use the fallback path safely with no critical errors.

Use variation/variationDetail, not allFlags/allFlagsState for evaluation

Direct evaluation emits accurate usage events required for flag statuses, experiments, and rollout tracking.

Implementation

- MUST Call

variation()/variationDetail()at the decision point - MUST NOT Implement an additional layer of caching for calls to variation that would prevent accurate flag evaluation telemetry from being generated

Validation

- Pass accurate flag evaluation data is shown in the Flag Monitoring dashboard

Flags are evaluated only where a change of behavior is exposed

Generate evaluation events only when a change in behavior is exposed to the end user. This ensures that features such as experimentation and guarded rollouts function correctly.

Implementation

- MUST Evaluate flags only when the value is used

- SHOULD Evaluate flags as close to the decision point as possible

The behavior changes are encapsulated and well-scoped

Isolate new vs. old logic to ease future cleanup. A rule of thumb is never store the result of a boolean flags in a variable. This ensures that the behavior impacted by the flag is fully contained within the branches of the if statement.

Implementation

- SHOULD Place new/old logic in separate functions/components; avoid mixed branches.

- SHOULD Evaluate the flag inside the decision point (

if) to simplify later removal.

// Example: evaluation scoped to the component

export function CheckoutPage() {

if (ldClient.variation('enable-new-checkout', false)) {

return <NewCheckoutComponent />;

}

return <LegacyCheckoutComponent />;

}

Subscribe to flag changes

In applications with a UI or server-side use-cases where you need to respond to a flag change, use the update/change events to update the state of the application.

Implementation

- SHOULD Use the subscription mechanism provided by the SDK to respond to updates. To learn more about subscribing to flag changes, read Subscribing to flag changes.

- SHOULD Unregister temporary handlers to avoid memory leaks.

Validation

- Pass if the application responds to flag changes

Contexts

Contexts are the foundation of feature flag targeting in LaunchDarkly. Understanding how to define and use contexts effectively is critical for successful feature flag implementation.

What are contexts

Contexts represent the entities you want to target with feature flags. A context can be a user, session, device, application, request, or any other entity relevant to your use case.

Each context consists of:

- Key: A unique identifier for the context

- Attributes: Additional data used for targeting and rollouts

Targeting rules evaluate against one or more contexts to determine which variation of a feature flag to serve.

Outcomes

By understanding contexts, you will:

- Know more about contexts, what information to pass, and how to organize it

- Understand the best practices for passing data to the LaunchDarkly SDK

- Be able to successfully pass information to the SDK to be leveraged for feature flags

Topics

This section covers:

- Context fundamentals: Learn about keys, attributes, and meta attributes

- Client vs server SDKs: Understand how context handling differs between SDK types

- Choosing keys and attributes: Best practices for selecting identifiers and attributes

- Automatic attributes: Platform-provided context data

- Best practices: Do’s and don’ts for context implementation

- Context types: Detailed documentation of each context type used in your organization

Context fundamentals

Contexts are composed of three core elements: a unique key, custom attributes, and optional meta attributes.

Key

A string that uniquely identifies a context. The key:

- May represent an individual user, session, device, or any other entity you wish to target

- Must be unique for each context instance

- Cannot be marked as private

- Is used for individual targeting, experimentation, and as the default value for rollouts

Attributes

Each attribute can have one or more values. You can define custom attributes with any additional information you wish to target on.

Supported attribute types

Attributes can contain one or more values of any of these supported types:

- String

- Boolean

- Number

- Semantic Version (string format)

- Date (RFC3339 or Unix Timestamp in milliseconds)

Some operations within rule clauses such as “less than” and “greater than” only support specific types.

Nested attributes

You can target on nested objects in contexts using a JSON path notation. For example, to target on an iOS version within a nested device object, use /os/ios/version as the attribute path.

Meta attributes

Meta attributes are reserved by LaunchDarkly and may have special meaning or usage in the platform.

Key meta attributes

Key meta attributes include:

| Name | Description |

|---|---|

| key | Required. Cannot be private. Used for individual targeting, experimentation and the default value for rollouts |

| anonymous | When true, the context will be hidden from the dashboard and will not appear in autocomplete |

| name | Used in the contexts dashboard and autocomplete search |

| _meta/privateAttributes | List of attribute names whose values will be redacted from events sent to LaunchDarkly |

For a complete list, see Built-in and custom attributes in the LaunchDarkly documentation.

Code examples

Server SDK

In server-side SDKs, pass the context on every variation call:

ldclient.variation("release-widget", context, fallback);

Client SDK

In client-side SDKs, provide context at initialization and update via identify():

const ldclient = LaunchDarkly.initialize(clientId, context);

// Later, update the context

ldclient.identify(newContext);

Usage and billing

Usage is based on the number of unique context keys seen for your primary (most used) context, deduplicated across your entire account.

Limits are not hard lines. Overages do not impact the evaluation of feature flags in your application.

To learn more, read Usage metrics.

Client SDK vs server SDK

Context handling differs significantly between client-side and server-side SDKs due to their different operational models.

Context handling comparison

Client-side SDKs

Client-side SDKs handle one active user or session:

- Provide context at initialization and update via

identify() - SDK fetches variations for that specific context and caches them

- When calling

variation(), no need to provide context anymore - Evaluations happen remotely via an evaluation endpoint

Server-side SDKs

Server-side SDKs evaluate for many users:

- No context required at initialization

- SDK downloads all flag rules at startup

- Pass context on every

variation()call:variation(flag, context, fallback) - SDK calculates variations against locally cached rules

Evaluation flow

Server-side evaluation

Application Request → Create Context → Call variation(flag, context, fallback)

↓

SDK evaluates locally using cached rules

↓

Return variation

Client-side evaluation

Page Load → Initialize SDK with context → SDK fetches user's variations

↓

Cache variations locally

↓

Call variation(flag) // No context needed

When to use each

Use server-side SDKs when

- Evaluating flags for multiple different users or entities

- Running backend services or APIs

- Need to keep flag rules private

- Evaluating flags in high-security contexts

Use client-side SDKs when

- Evaluating flags for a single active session

- Running in browsers or mobile apps

- Need real-time flag updates for the current user

- Implementing user-specific feature rollouts

Updating contexts

Client-side context updates

Use identify() to update the context:

const ldclient = LaunchDarkly.initialize(clientId, context);

// User logs in

ldclient.identify({

kind: "user",

key: "user-123",

name: "Jane Doe",

email: "jane@example.com"

});

The SDK will fetch new variations for the updated context.

Server-side context changes

Simply pass a different context to variation():

// Evaluate for user 1

const variation1 = ldclient.variation("flag-key", userContext1, false);

// Evaluate for user 2

const variation2 = ldclient.variation("flag-key", userContext2, false);

No SDK reconfiguration needed.

Performance considerations

Client-side SDKs

- Initial page load includes SDK initialization time

- Subsequent evaluations are instant (served from cache)

identify()calls require network round-trip- Consider bootstrapping to eliminate initialization delay

Server-side SDKs

- Initialization happens once at application startup

- All evaluations are local and extremely fast

- No per-request network overhead

- Consider persistent stores for daemon mode deployments

Choosing keys and attributes

Selecting appropriate keys and attributes is critical for effective feature flag targeting and progressive rollouts.

Choosing a key

A well-chosen key balances consistency and risk distribution during progressive rollouts.

Key characteristics

Unique: Static 1:1 mapping to a context

Each context instance must have a unique key that always identifies the same entity.

Opaque: Non-sequential, not derived from sensitive information

Keys should not be predictable or contain sensitive data like email addresses or social security numbers.

High cardinality: Many unique values will be seen by the application

Ensure the key space is large enough to support meaningful percentage rollouts. Low cardinality (like “true”/“false”) prevents fine-grained rollout control.

Rollout consistency

The key is used as the default attribute for percentage rollouts. This applies to any attribute you plan on using for rollouts.

Example: Session consistency

To maintain consistency pre and post-login, use a session context instead of a user context for rollouts:

// Session context persists across authentication

const sessionContext = {

kind: "session",

key: generateSessionId(), // UUID stored in session storage

anonymous: true

};

Configure rollouts by session key to ensure users see consistent behavior whether logged in or not.

Defining attributes

Create attributes that support your targeting and rollout use cases.

Do

Create multiple identifiers for different contexts and consistency boundaries

Define separate context kinds for user, session, device, etc., each with appropriate attributes:

const multiContext = {

kind: "multi",

user: {

key: "user-123",

name: "Jane Doe",

createdAt: 1640000000000

},

session: {

key: "session-abc",

anonymous: true,

startedAt: Date.now()

}

};

Create attributes that support targeting and rollout use-cases

Include attributes you’ll actually use for targeting:

const userContext = {

kind: "user",

key: "user-123",

email: "jane@example.com",

plan: "enterprise",

region: "us-west",

betaTester: true

};

Define private attributes when targeting on sensitive information

Mark sensitive attributes as private to prevent them from being sent to LaunchDarkly:

const userContext = {

kind: "user",

key: "user-123",

email: "jane@example.com",

_meta: {

privateAttributes: ["email", "ipAddress"]

}

};

Do not

Use any values derived from PII or sensitive values as keys

Never use email addresses, phone numbers, or other PII directly as keys:

// Bad

const context = { kind: "user", key: "jane@example.com" };

// Good

const context = {

kind: "user",

key: "user-123",

email: "jane@example.com",

_meta: { privateAttributes: ["email"] }

};

Rapidly change attributes in client-side SDKs

Avoid using current timestamp or frequently changing values as attributes:

// Bad - causes excessive events

const context = {

kind: "user",

key: "user-123",

lastActivity: Date.now() // Changes every render

};

// Good - use stable attributes

const context = {

kind: "user",

key: "user-123",

sessionStart: sessionStartTime // Stable for session

};

Mix value types or sources for an attribute within the same project

Keep attribute types consistent across your codebase:

// Bad - inconsistent types

// iOS app sends: { plan: "enterprise" }

// Web app sends: { plan: 3 }

// Good - consistent types

// All apps send: { plan: "enterprise" }

Attribute naming conventions

Follow these conventions for consistency:

- Use camelCase for attribute names:

userId,planType,isActivated - Use clear, descriptive names: prefer

accountCreationDateoveracd - Prefix boolean attributes with

is,has, orcan:isActive,hasAccess,canEdit - Use standard date formats: RFC3339 strings or Unix timestamps in milliseconds

Multi-kind contexts

For complex targeting scenarios, use multi-kind contexts to evaluate against multiple entities simultaneously:

const multiContext = {

kind: "multi",

user: {

key: "user-123",

email: "jane@example.com",

plan: "enterprise"

},

organization: {

key: "org-456",

name: "Acme Corp",

industry: "technology"

},

device: {

key: "device-789",

platform: "iOS",

model: "iPhone 14"

}

};

This allows targeting rules like “serve to users in enterprise plan OR organizations in technology industry”.

Automatic environment attributes

Some SDKs automatically collect environment metadata and make it available as context attributes. This reduces boilerplate and provides consistent targeting capabilities.

ld_application

Automatically collected application metadata available in mobile and client-side SDKs.

Attributes

The ld_application context includes these attributes:

| Name | Description |

|---|---|

| key | Automatically generated by the SDK |

| id | Bundle Identifier |

| locale | Locale of the device, in IETF BCP 47 Language Tag format |

| name | Human-friendly name of the application |

| version | Version of the application used for update comparison |

| versionName | Human-friendly name of the version |

Use cases

Disable features on known bad builds

Target specific application versions to disable features on buggy releases:

IF ld_application version is one of 1.2.3, 1.2.4

THEN serve "Off"

This is valuable for mobile applications or heavily cached SPAs. Users may not update immediately.

Application-level configuration and customization

Serve different configurations based on application bundle ID or locale:

IF ld_application locale is one of es, es-MX, es-ES

THEN serve spanish-config

Determine when to sunset legacy behavior

Export context metrics to understand what application versions are still in use:

IF ld_application version < 2.0.0

THEN serve legacy-behavior

ELSE serve new-behavior

Use LaunchDarkly’s Data Export to analyze version distribution. This helps you decide when to drop support for older versions.

ld_device

Information about the platform, operating system, and device automatically collected by mobile SDKs.

Attributes

The ld_device context includes these attributes:

| Name | Description |

|---|---|

| key | Automatically generated by the SDK |

| manufacturer | Manufacturer of the device (Apple, Samsung, etc.) |

| model | Model of the device (iPhone, iPad, Galaxy S21) |

| /os | Operating system of the device. Includes properties for family, name, and version |

Use cases

Roll out by platform to reduce platform-specific issues

Start rollouts on platforms where you have stronger test coverage:

Rollout 10% by ld_device key

IF ld_device /os/family is iOS

Release to tier 1 supported platforms before testing on lower tiers

Prioritize your primary platforms:

IF ld_device manufacturer is one of Apple, Samsung

THEN serve 20% rollout

ELSE serve 5% rollout

Platform-specific feature or hardware targeting

Target features that require specific hardware capabilities:

IF ld_device model is one of iPhone 14, iPhone 15

AND custom-attribute has-nfc is true

THEN serve nfc-payment-feature

Operating system targeting

Access nested OS information using JSON paths:

IF ld_device /os/name is Android

AND ld_device /os/version >= 13

THEN serve android-13-features

To learn more, read the LaunchDarkly documentation on automatic environment attributes.

Best practices

Guidelines for implementing contexts effectively and avoiding common pitfalls.

Context design

Do

Create attributes that support targeting and rollout use cases

Only add attributes you’ll actually use for targeting or analytics:

// Good - actionable attributes

const context = {

kind: "user",

key: "user-123",

plan: "enterprise", // For entitlement targeting

region: "us-west", // For regional rollouts

betaTester: true // For beta feature access

};

// Bad - unused attributes

const context = {

kind: "user",

key: "user-123",

favoriteColor: "blue", // Not used for targeting

shoeSize: 10 // Not used for targeting

};

Create multiple identifiers for different contexts and consistency boundaries

Define separate contexts for user, session, device, etc.:

const multiContext = {

kind: "multi",

user: {

key: "user-123",

plan: "enterprise"

},

session: {

key: "session-abc",

anonymous: true

},

device: {

key: "device-789",

platform: "iOS"

}

};

Define private attributes when targeting on sensitive information

Mark any PII or sensitive data as private:

const context = {

kind: "user",

key: "user-123",

email: "jane@example.com",

ipAddress: "192.168.1.1",

_meta: {

privateAttributes: ["email", "ipAddress"]

}

};

Do not

Use values derived from PII or sensitive values as keys

Never use email, phone numbers, or other PII directly as keys:

// Bad - PII as key

const context = { kind: "user", key: "jane@example.com" };

// Good - opaque key, PII as private attribute

const context = {

kind: "user",

key: "user-123",

email: "jane@example.com",

_meta: { privateAttributes: ["email"] }

};

Rapidly change attributes in client-side SDKs

Avoid timestamp or frequently changing attributes:

// Bad - changes every render

const context = {

kind: "user",

key: "user-123",

currentTime: Date.now()

};

// Good - stable attributes

const context = {

kind: "user",

key: "user-123",

sessionStartTime: sessionStart

};

Mix value types or sources for an attribute within the same project

Keep attribute types consistent:

// Bad - inconsistent types across applications

// iOS: { accountType: "premium" }

// Web: { accountType: 1 }

// Good - consistent types

// All apps: { accountType: "premium" }

Flag evaluation

Do

Call variation/variationDetail where the flag will be used

Evaluate flags at the point of use:

// Good - evaluate where needed

function renderButton() {

const showNewButton = ldClient.variation("new-button-ui", context, false);

return showNewButton ? <NewButton /> : <OldButton />;

}

// Bad - evaluate unnecessarily

function loadPage() {

const allFlags = ldClient.allFlags(); // Evaluates all flags

// Only use one flag

return allFlags['new-button-ui'];

}

Maintain fallback values that allow the application to function

Choose safe fallback values:

// Good - safe fallbacks

const maxRetries = ldClient.variation("max-retries", context, 3);

const featureEnabled = ldClient.variation("new-feature", context, false);

// Bad - no fallback or unsafe fallback

const maxRetries = ldClient.variation("max-retries", context); // undefined

const criticalFeature = ldClient.variation("payment-enabled", context, true); // unsafe default

Write code with cleanup in mind

Minimize flag usage to simplify cleanup:

// Good - single evaluation point

function PaymentForm() {

const useNewPaymentFlow = ldClient.variation("new-payment-flow", context, false);

return useNewPaymentFlow ? <NewPaymentForm /> : <OldPaymentForm />;

}

// Bad - multiple evaluation points

function PaymentForm() {

if (ldClient.variation("new-payment-flow", context, false)) {

// New flow code

}

const buttonText = ldClient.variation("new-payment-flow", context, false)

? "Pay Now"

: "Submit Payment";

// More evaluations...

}

Do not

Use allFlags/allFlagsState for use cases other than passing values to another application

Only use allFlags when absolutely necessary:

// Bad - unnecessary allFlags call

const flags = ldClient.allFlags();

if (flags['feature-x']) {

// Use feature

}

// Good - targeted evaluation

if (ldClient.variation("feature-x", context, false)) {

// Use feature

}

// Good - passing to another application

const flagState = ldClient.allFlagsState(context);

bootstrapFrontend(flagState);

Call variation/variationDetail without using the flag value

Don’t evaluate flags you won’t use:

// Bad - unused evaluation

ldClient.variation("feature-flag", context, false);

// Flag value never used

// Good - use the value

const enabled = ldClient.variation("feature-flag", context, false);

if (enabled) {

enableFeature();

}

Use flags without a plan

Have a clear purpose and cleanup plan:

// Bad - unclear purpose

const flag1 = ldClient.variation("temp-flag", context, false);

const flag2 = ldClient.variation("test-something", context, false);

// Good - clear purpose and naming

const useNewCheckoutFlow = ldClient.variation(

"checkout-flow-v2-rollout", // Clear name

context,

false // Safe fallback to old flow

);

// TODO: Remove this flag after 100% rollout - JIRA-123

Code references

Add ld-find-code-refs to your CI pipeline to track flag usage:

# .github/workflows/launchdarkly-code-refs.yml

name: LaunchDarkly Code References

on: push

jobs:

find-code-refs:

runs-on: ubuntu-latest

steps:

- uses: actions/checkout@v3

- name: LaunchDarkly Code References

uses: launchdarkly/find-code-references@v2

with:

project-key: my-project

access-token: ${{ secrets.LD_ACCESS_TOKEN }}

Code references enable you to:

- Identify where flags are used in your codebase

- Understand the impact before making flag changes

- Find and remove flags when they’re no longer needed

- Manage technical debt associated with feature flags

Flag planning

Make flag planning part of feature design. Before creating a flag, ask:

- Is this flag temporary or permanent?

- Who is responsible for maintaining the targeting rules in each environment?

- How will this flag be targeted? (By user? By session? By organization?)

- Does this feature have any dependencies? (Other flags? External services?)

- What is the cleanup plan? (When can this flag be removed?)

Minimize flag reach

Use flags in as few places as possible:

// Good - single evaluation point

function App() {

const useNewUI = ldClient.variation("new-ui", context, false);

return <AppLayout newUI={useNewUI} />;

}

// Bad - evaluated throughout the application

function Header() {

if (ldClient.variation("new-ui", context, false)) { /* ... */ }

}

function Sidebar() {

if (ldClient.variation("new-ui", context, false)) { /* ... */ }

}

function Footer() {

if (ldClient.variation("new-ui", context, false)) { /* ... */ }

}

A flag should have a single, well-defined scope. For larger features, consider breaking them into multiple flags using prerequisites.

Failure mode resilience

Connection loss

If the connection to LaunchDarkly is lost:

Client-side SDKs: Context cannot be updated via identify() until connectivity is re-established. Values from the in-memory cache will be served.

Server-side SDKs: Evaluation for any context can still take place using the in-memory feature store.

Initialization failure

If the SDK is unable to initialize:

Both SDK types: Locally cached values will be served if available, otherwise fallback values will be used.

Always provide sensible fallback values that allow your application to function:

// Good - safe degradation

const featureEnabled = ldClient.variation("new-feature", context, false);

// Bad - application breaks if SDK fails

const criticalConfig = ldClient.variation("api-endpoint", context); // undefined

Context types

This section documents the context types used in your organization. Each context type represents a different entity you can target with feature flags.

Available context types

Session

Unauthenticated user sessions that maintain consistency pre and post-login. Use for anonymous user tracking and experimentation.

User

Authenticated users with consistent experience across devices. Use for user-level entitlements and cross-device feature rollouts.

Build

Build metadata for version-aware feature management across applications and services. Use for build-specific targeting and microservice coordination.

Browser

Browser and platform detection for web applications. Use for browser-specific rollouts and progressive enhancement.

Request

Request or transaction-specific metadata for API services. Use for API versioning and endpoint-specific behavior.

Multi-kind contexts

You can combine multiple context types in a single evaluation for sophisticated targeting:

const multiContext = {

kind: "multi",

session: {

key: sessionId,

anonymous: true

},

user: {

key: userId,

name: userName

},

build: {

key: "api-orders-2.5.0",

version: "2.5.0"

}

};

This enables targeting rules like “roll out to 20% of sessions OR all enterprise users on build version 2.5.0+”.

Adding custom context types

To document a new context type for your organization:

- Create a new markdown file in this directory named after your context kind

- Follow the table structure from existing context types

- Include implementation examples

- Document specific use cases for your context

- Add the new context type to the navigation in

SUMMARY.md

Session

Unauthenticated user sessions that maintain consistency pre and post-login.

Session contexts use these attributes:

| Attribute | Type | Source | Example | Private | Notes |

|---|---|---|---|---|---|

| key | String | Local/Session Storage | 7D97F0D3-B18C-4305-B110-0317BDB745DC | FALSE | Random UUID stored in session-bound storage |

| anonymous | Boolean | Static: true | true | FALSE | Always true for session contexts |

| startedAt | Number | Current time at session creation | 1640995200000 | FALSE | Unix timestamp in milliseconds |

Implementation example

Implement a session context like this:

const sessionContext = {

kind: "session",

key: getOrCreateSessionId(),

anonymous: true,

startedAt: Date.now()

};

function getOrCreateSessionId() {

let sessionId = sessionStorage.getItem('ld-session-id');

if (!sessionId) {

sessionId = crypto.randomUUID();

sessionStorage.setItem('ld-session-id', sessionId);

}

return sessionId;

}

Use cases

Progressive release for unauthenticated users

Use this when you roll out features to users before they log in. This ensures anonymous visitors see new features without authentication.

Maintain consistency across authentication

Use this when features should remain consistent before and after login. For example, a user sees a new checkout flow while browsing anonymously. They continue to see it after logging in during the same session.

Kill switch for resource-intensive features

Use this when you disable expensive features for unauthenticated users. For example, disable AI-powered recommendations or real-time chat for anonymous sessions. This reduces infrastructure costs.

Experimentation for pre-authentication paths

Use this when you run experiments on registration flows, shopping cart behavior, or add-to-cart functionality. Rolling out by session ensures consistent experience throughout the visitor’s journey.

User

Authenticated users with consistent experience across devices.

User contexts use these attributes:

| Attribute | Type | Source | Example | Private | Notes |

|---|---|---|---|---|---|

| key | String | User Database | 7D97F0D3-B18C-4305-B110-0317BDB745DC | FALSE | User identifier from database (UUID) |

| anonymous | Boolean | Static: false | false | FALSE | Always false for authenticated users |

| name | String | User Profile | Jane Doe | FALSE | User’s first and last name |

| String | User Profile | jane@example.com | TRUE | User’s email address | |

| createdAt | Number | User Database | 1640995200000 | FALSE | Unix timestamp in milliseconds |

Implementation example

Implement a user context like this:

const userContext = {

kind: "user",

key: currentUser.id,

anonymous: false,

name: `${currentUser.firstName} ${currentUser.lastName}`,

email: currentUser.email,

createdAt: currentUser.createdAt,

_meta: {

privateAttributes: ["email"]

}

};

Use cases

Progressive release for features consistent across devices

Use this when you roll out features that work the same way on web, mobile, and tablet. For example, a new user profile layout or account settings feature.

User-level entitlements and overrides

Use this when you enable features for specific users or user groups. For example, beta features for internal employees or premium features for paying customers.

Experimentation for post-authentication activities

Use this when you run experiments on authenticated features like account management, saved preferences, or personalized recommendations. This ensures consistent experience across sessions and devices.

Enable legacy behavior based on user creation date

Use this when you maintain backward compatibility for existing users. For example, grandfather users created before a certain date into the old pricing model or feature set.

Build

Build metadata for version-aware feature management across applications and services.

Build contexts use these attributes:

| Attribute | Type | Source | Example | Private | Notes |

|---|---|---|---|---|---|

| key | String | Composite | api-orders-2.5.0 | FALSE | Service/App ID + Version |

| id | String | Build Configuration | api-orders | FALSE | Unique identifier for the service/app |

| name | String | Build Configuration | Order API | FALSE | Human-friendly name |

| version | String | Build Metadata | 2.5.0 | FALSE | Build version (semantic version) |

| versionName | String | Build Metadata | 2.5 | FALSE | Human-friendly version name |

| buildDate | Number | Build Metadata | 1640995200000 | FALSE | Unix timestamp when build was created |

| commit | String | Git Metadata | a1b2c3d | FALSE | Git commit SHA (short or full) |

Implementation example

Implement a build context like this:

const buildContext = {

kind: "build",

key: `${SERVICE_ID}-${VERSION}`,

id: process.env.SERVICE_ID,

name: "Order API",

version: process.env.VERSION,

versionName: "2.5",

buildDate: parseInt(process.env.BUILD_TIMESTAMP),

commit: process.env.GIT_COMMIT

};

Use cases

Disable features on known bad builds

Use this when you quickly disable features on buggy releases. For example, version 2.5.0 has a critical bug. Disable the problematic feature only for that version while users update.

Application-level configuration and customization

Use this when different applications in your ecosystem need different feature sets. For example, enable advanced analytics in the admin portal. Do not enable it in the customer-facing app.

Export metrics to determine when to sunset legacy behavior

Use this when you plan to remove old code paths. Export context metrics to understand what application versions are still in use. This helps you decide when to drop support for older versions.

Coordinate microservice deployments

Use this when you roll out features that require specific service versions. For example, enable a new API endpoint only when both frontend and backend deploy compatible versions.

Target by git commit

Use this when you enable or disable features for specific code commits. For example, a regression occurs in commit a1b2c3d. Disable the problematic feature only for that commit while you prepare a fix.

Browser

Browser and platform detection for web applications.

Browser contexts use these attributes:

| Attribute | Type | Source | Example | Private | Notes |

|---|---|---|---|---|---|

| key | String | Composite | chrome-120.0.6099.109 | FALSE | Browser Identifier + Version String |

| userAgent | String | Navigator API | Mozilla/5.0… | FALSE | Browser’s user-agent string |

| appName | String | Browser Detection | Chrome | FALSE | Browser app name (Firefox, Safari, Chrome) |

| /app/firefox/version | String | Browser Detection | 121.0 | FALSE | Firefox version |

| /app/chrome/version | String | Browser Detection | 120.0.6099.109 | FALSE | Chrome version |

| /app/safari/version | String | Browser Detection | 17.2 | FALSE | Safari version |

| /locale/tag | String | Navigator API | en | FALSE | Language code (en, es, de) |

Implementation example

Implement a browser context like this:

const browserContext = {

kind: "browser",

key: `${browserName}-${browserVersion}`,

userAgent: navigator.userAgent,

appName: browserName,

app: {

[browserName.toLowerCase()]: {

version: browserVersion

}

},

locale: {

tag: navigator.language.split('-')[0]

}

};

Use cases

Roll out by browser to reduce browser-specific issues

Use this when you start a rollout on browsers where you have strong test coverage. For example, roll out to Chrome at 20% while Safari remains at 5% until you verify compatibility.

Progressive enhancement based on browser capabilities

Use this when you enable features that require modern browser APIs. For example, enable WebGL-based visualizations only in browsers that support it. You can also use Service Workers only in compatible browsers.

Browser version targeting

Use this when your features require minimum browser versions. For example, enable features that use CSS Grid only on browsers with full Grid support. Use modern JavaScript features only on browsers with ES2020+ support.

Localization testing

Use this when you roll out internationalization features. For example, enable new translations for users with specific locale settings. You can also test RTL layout for Arabic or Hebrew language users.

Request

Request or transaction-specific metadata for API services.

Request contexts use these attributes:

| Attribute | Type | Source | Example | Private | Notes |

|---|---|---|---|---|---|

| key | String | Generated UUID | 7D97F0D3-B18C-4305-B110-0317BDB745DC | FALSE | Random UUID per request |

| anonymous | Boolean | Static: true | true | FALSE | Always true for request contexts |

| path | String | HTTP Request | /api/users | FALSE | HTTP request path |

| method | String | HTTP Request | POST | FALSE | HTTP request method |

| api-version | String | HTTP Header | v2 | FALSE | API version from X-API-Version header |

| request-client | String | HTTP Header | mobile-ios | FALSE | Name of the requesting client application |

Implementation example

Implement a request context like this:

const requestContext = {

kind: "request",

key: crypto.randomUUID(),

anonymous: true,

path: req.path,

method: req.method,

"api-version": req.headers['x-api-version'],

"request-client": req.headers['x-client-name']

};

Use cases

Coordinate breaking changes across projects

Use this when you manage API versioning with feature flags. For example, serve new response formats only to requests specifying api-version: v2 or higher. This allows gradual migration.

Distribute risk by request or transaction

Use this when you roll out new behavior for specific endpoints. For example, enable new validation logic on the /api/orders endpoint at 10% while other endpoints remain unchanged.

Request-level rate limiting or special handling

Use this when you apply different behavior to specific request types. For example, enable aggressive caching only for GET requests. You can also apply stricter validation for POST/PUT/DELETE operations.

Client-specific behavior

Use this when you serve different responses based on the client. For example, return simplified responses for mobile clients to reduce bandwidth. You can also enable experimental features for internal testing tools.

Observability

Observability enables you to monitor and understand SDK behavior across your applications. Effective observability helps you identify issues, track usage patterns, and maintain healthy SDK implementations.

What is SDK observability

SDK observability provides visibility into how your applications interact with LaunchDarkly. This includes tracking SDK versions in use, monitoring connection health, and understanding evaluation patterns.

Key observability capabilities:

- Version tracking: Monitor which SDK versions are deployed across environments

- Connection monitoring: Track SDK connectivity and relay proxy usage

- End-of-life awareness: Identify SDKs approaching or past their support lifecycle

- Performance insights: Understand SDK behavior and resource usage

Outcomes

By implementing SDK observability, you will:

- Maintain up-to-date SDK versions across your organization

- Identify security risks from outdated SDKs

- Reduce support incidents through proactive monitoring

- Make informed decisions about SDK upgrades and migrations

Topics

This section covers:

- SDK version tracking: Use the SDK versions API to monitor deployed versions and identify upgrade needs

SDK version tracking

Track which SDK versions are deployed across your projects and environments to maintain security, compatibility, and access to the latest features.

Why track SDK versions

Tracking SDK versions helps you:

- Identify outdated SDKs that may have security vulnerabilities

- Understand which SDKs are approaching end-of-life

- Plan SDK upgrade initiatives across your organization

- Monitor relay proxy versions alongside client SDKs

- Maintain compliance with security policies

SDK versions API endpoint

LaunchDarkly provides a dedicated API endpoint to retrieve detailed SDK version usage data. The endpoint returns one record per unique SDK and project/environment combination, representing the maximum version observed in the last day.